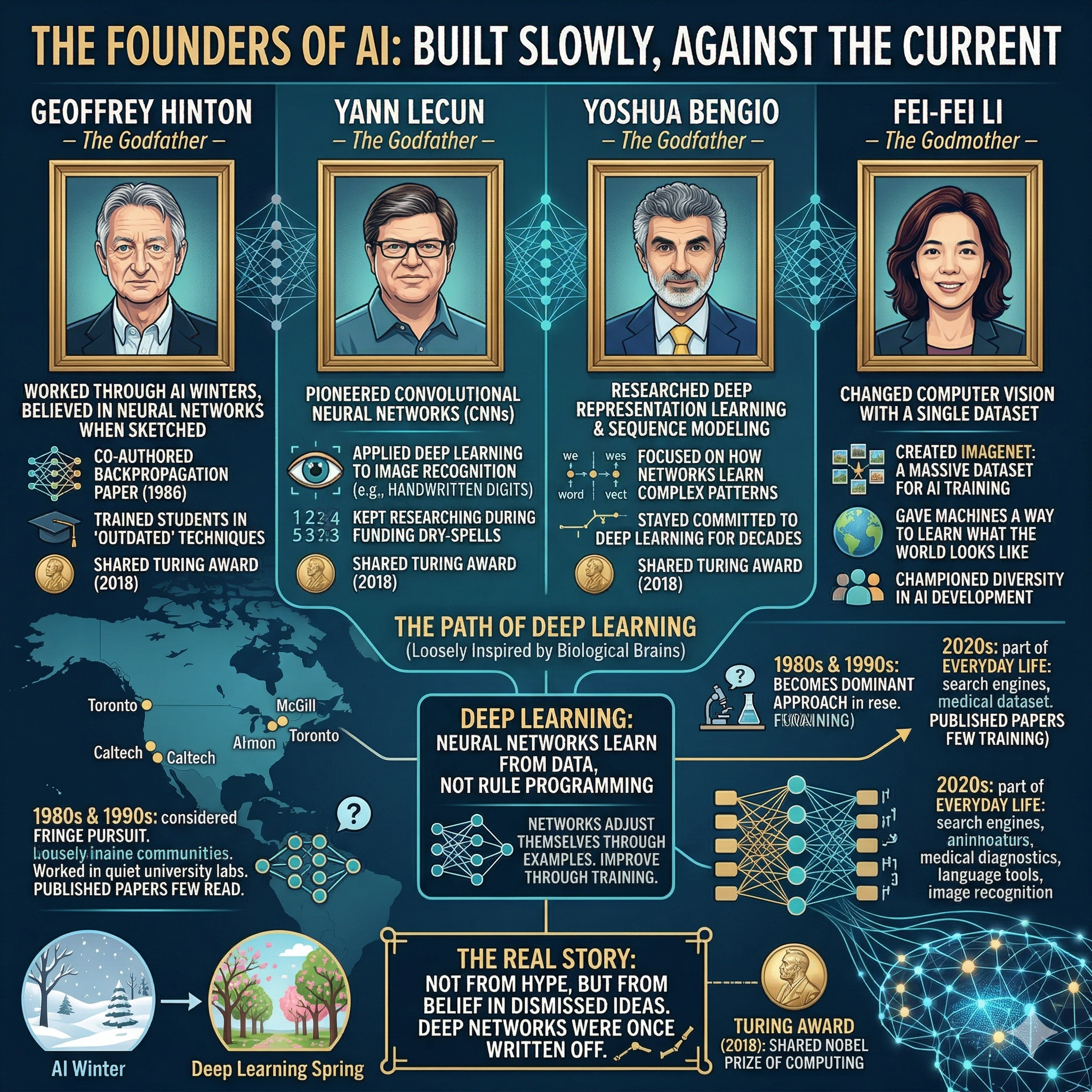

Some versions of the artificial intelligence story begin with ChatGPT, or perhaps with the smartphone, or even with science fiction. But the real story starts much earlier, in quiet university offices, underfunded labs, and research departments that most of the world had written off as a dead end.

Four people, more than almost anyone else, are responsible for the AI systems that now power search engines, medical diagnostics, language tools, and image recognition. Three of them are known as the Godfathers of AI. One is called its Godmother. Together, Geoffrey Hinton, Yann LeCun, Yoshua Bengio, and Fei-Fei Li built the intellectual foundations of a technology that has changed how the world works.

What makes their stories worth telling is not just what they discovered. It is how long they believed in ideas that most people around them did not take seriously. Through the decades often described as the “AI winters,” when funding dried up and researchers abandoned the field for more practical pursuits, this group kept working. During those years, they published papers that few people read, trained students in techniques the broader community considered outdated, and asked questions that most of their peers found either too abstract or too ambitious.

The technology they helped create, broadly called deep learning, is built on artificial neural networks: computational systems loosely inspired by how biological brains process information.

These networks learn from data. Instead of being programmed with rules, they adjust themselves by exposure to examples, improving through a process called training. In the 1980s and 1990s, this idea was considered a fringe pursuit. By the 2010s, it had become the dominant approach in AI research. By the 2020s, it had become part of everyday life.

In 2018, Hinton, LeCun, and Bengio shared the Turing Award, often described as the Nobel Prize of computing, for their decades of foundational work.

Meanwhile, Li had already changed the course of computer vision years earlier with a single dataset that gave machines something they had never really had before: a way to learn what the world looks like.

These are not figures who emerged suddenly at a moment of hype. They are people who built something slowly, carefully, and in many cases against the current. Their individual journeys tell four different versions of the same story, which is that the ideas others dismiss often turn out to matter most.

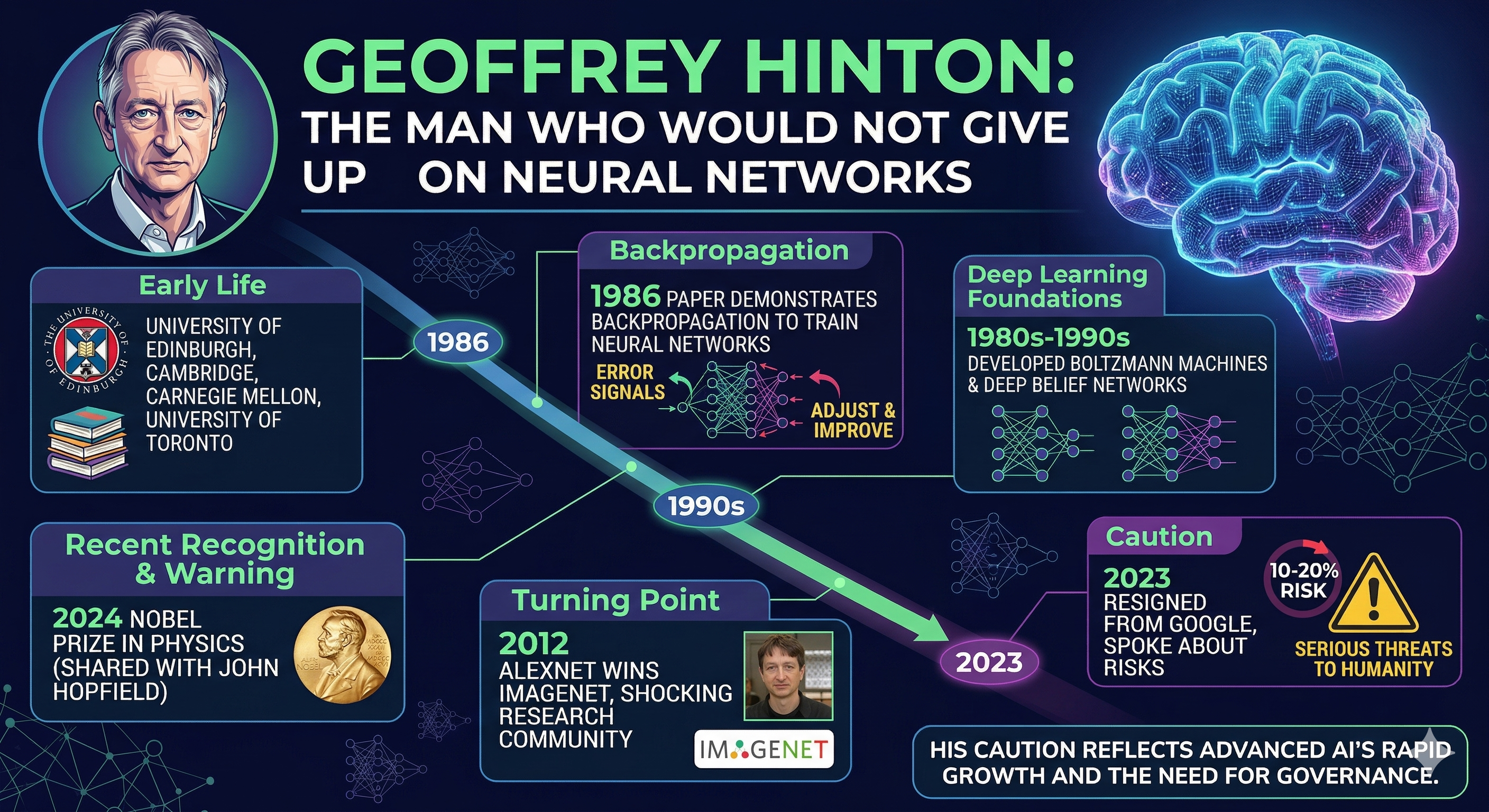

Geoffrey Hinton: The man who would not give up on neural networks

Geoffrey Everest Hinton was born in Britain in 1947 and spent much of his early career trying to convince a sceptical scientific community that brains, and machines that mimic them, held the answer to artificial intelligence. He studied experimental psychology at the University of Cambridge and earned his PhD in artificial intelligence from the University of Edinburgh, later taking a post at Carnegie Mellon and then settling at the University of Toronto, where he would do some of his most influential work.

Hinton’s core belief was that machines could learn to represent the world through layered, interconnected nodes, much the way neurons in the brain form connections. In 1986, he co-authored a paper with David Rumelhart and Ronald Williams that demonstrated how backpropagation could train multilayer neural networks. The algorithm worked by sending error signals backward through a network so it could adjust itself and improve.
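For readers who want to see the idea in motion, here is a minimal sketch of backpropagation in plain Python. It is an illustration only, not the 1986 paper's formulation: the tiny network size, the starting weights, the learning rate, and the toy task (the logical OR of two inputs) are all assumptions chosen to keep the example short.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Forward pass through one hidden layer to a single output unit."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output

def loss(params, data):
    """Mean of half the squared error over the dataset."""
    return sum(0.5 * (forward(params, x)[1] - y) ** 2 for x, y in data) / len(data)

def backprop_step(params, x, y, lr):
    """One gradient-descent update computed from a single example."""
    w1, b1, w2, b2 = params
    hidden, output = forward(params, x)
    # Error signal at the output unit.
    delta_out = (output - y) * output * (1.0 - output)
    # Send that signal backward through the output weights to each hidden unit.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w2, hidden)]
    # Adjust every weight in proportion to its share of the error.
    new_w2 = [w - lr * delta_out * h for w, h in zip(w2, hidden)]
    new_b2 = b2 - lr * delta_out
    new_w1 = [[w - lr * d * xi for w, xi in zip(row, x)]
              for row, d in zip(w1, delta_hidden)]
    new_b1 = [b - lr * d for b, d in zip(b1, delta_hidden)]
    return new_w1, new_b1, new_w2, new_b2

def train(params, data, lr=0.5, epochs=2000):
    for _ in range(epochs):
        for x, y in data:
            params = backprop_step(params, x, y, lr)
    return params

# Hypothetical toy data (logical OR) and arbitrary starting weights.
OR_DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
           ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]
INITIAL_PARAMS = ([[0.5, -0.4], [0.3, 0.8]], [0.0, 0.0], [0.7, -0.6], 0.0)

if __name__ == "__main__":
    trained = train(INITIAL_PARAMS, OR_DATA)
    print("loss before training:", loss(INITIAL_PARAMS, OR_DATA))
    print("loss after training: ", loss(trained, OR_DATA))
```

The two `delta` lines carry the whole idea: the output's error is computed first, then passed backward so the hidden layer can be corrected as well.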

This paper became one of the most cited works in computer science history, though at the time of its publication it attracted little attention.

Through the 1980s and into the 1990s, Hinton continued developing ideas that the mainstream field largely set aside in favour of other approaches. He worked on Boltzmann machines, a type of probabilistic network, and later on deep belief networks, which helped solve a persistent problem in training deep systems. In 2012, a student of his, Alex Krizhevsky, built a neural network called AlexNet that won the ImageNet image recognition competition by a margin large enough to shock the research community. The result was a turning point for the entire field of AI.

In 2024, Hinton received the Nobel Prize in Physics, shared with John Hopfield, for work that had helped lay the theoretical groundwork for machine learning with artificial neural networks.

By then, however, Hinton had already made a different kind of news. In 2023, he resigned from Google and began speaking openly about risks he believed were growing alongside AI’s capabilities. He estimated a 10-20% probability that advanced AI could pose serious threats to humanity within the coming decades. For a man who had spent his life building the technology, the warning carried weight.

His turn toward caution was not a rejection of his life’s work but a recognition that the systems he helped create had grown faster and more capable than he had expected, and that the world was not ready to govern them.

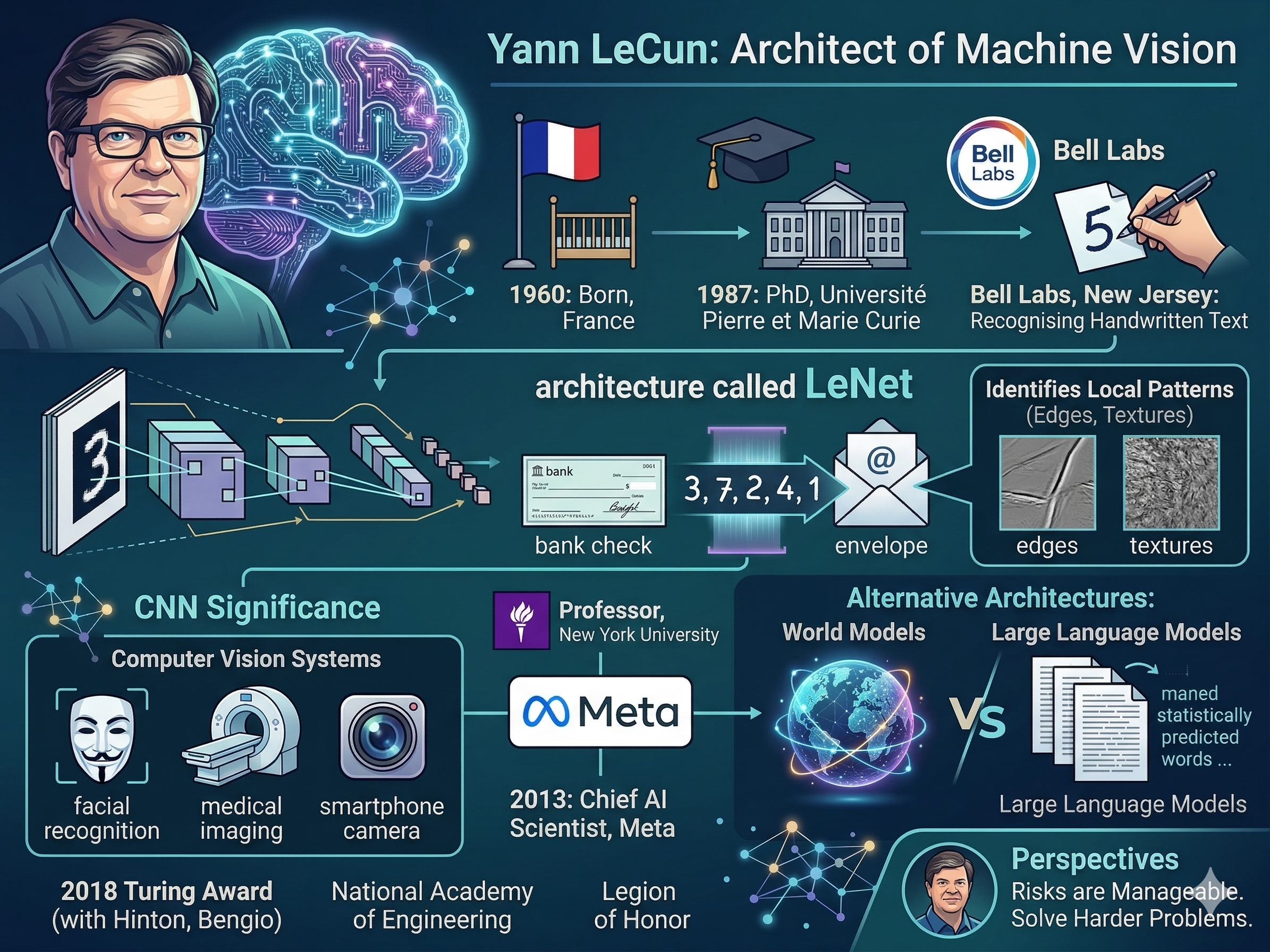

Yann LeCun: The architect of machine vision

Yann LeCun was born in France in 1960 and came to deep learning from a background in mathematics and engineering. After completing his PhD at the Université Pierre et Marie Curie in 1987, he joined Bell Labs in New Jersey, where he worked on one of the most practically important problems in AI: teaching machines to recognise handwritten text.

The result of that work was an architecture called LeNet, a convolutional neural network designed to identify handwritten digits.

The key insight behind convolutional neural networks, or CNNs, was that images contain local patterns such as edges, textures, and shapes, and that a network should look for those patterns wherever they appear rather than treating each pixel in isolation.
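A small sketch can make that insight concrete. The code below is not LeNet; it is a toy, assuming a hand-written 2x2 edge filter and a made-up 6x6 image, that slides one filter across the picture and shows the same filter firing wherever the edge happens to sit. In a real CNN the filter values are learned rather than written by hand.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products.

    This is the sliding-window operation that convolutional layers apply.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]

# Hypothetical hand-written filter that responds to a dark-to-bright vertical edge.
VERTICAL_EDGE = [[-1, 1],
                 [-1, 1]]

def make_image(edge_col, size=6):
    """Toy image: dark (0) left of edge_col, bright (1) from edge_col onward."""
    return [[1 if c >= edge_col else 0 for c in range(size)]
            for _ in range(size)]

def strongest_column(feature_map):
    """Column where the filter responds most strongly in the first row."""
    row = feature_map[0]
    return row.index(max(row))

if __name__ == "__main__":
    for edge_col in (2, 4):
        feature_map = convolve2d(make_image(edge_col), VERTICAL_EDGE)
        print("edge at column", edge_col,
              "-> strongest response at column", strongest_column(feature_map))
```

Because the same four numbers are reused at every position, the filter needs no retraining when the edge moves, which is one reason the approach needs far fewer parameters than wiring every pixel to every unit.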

This made the networks far more efficient and more effective at understanding visual information. By the late 1990s, LeNet was being used by banks and postal services to read handwritten numbers on cheques and envelopes.

The significance of that work only became clear much later. CNNs went on to underpin much of modern computer vision, from facial recognition to medical imaging to smartphone cameras. LeCun had built the foundation before most of the industry knew it needed one.

He went on to become a professor at New York University and, in 2013, joined Facebook (now Meta) to lead its AI research, later serving as the company’s Chief AI Scientist while continuing his academic work.

More recently, LeCun has argued that current large language models, despite their impressive output, do not represent a path to true machine intelligence. He has proposed alternative architectures and ideas around what he calls world models, systems that learn a structured internal understanding of how the world works rather than predicting text statistically.

LeCun shared the 2018 Turing Award with Hinton and Bengio. He has been elected to the National Academy of Engineering and received the Legion of Honor from the French government. Where Hinton has become a cautionary voice, LeCun has positioned himself as someone who believes the risks of current AI are manageable, and that the field needs to solve harder problems before it needs to worry about existential ones.

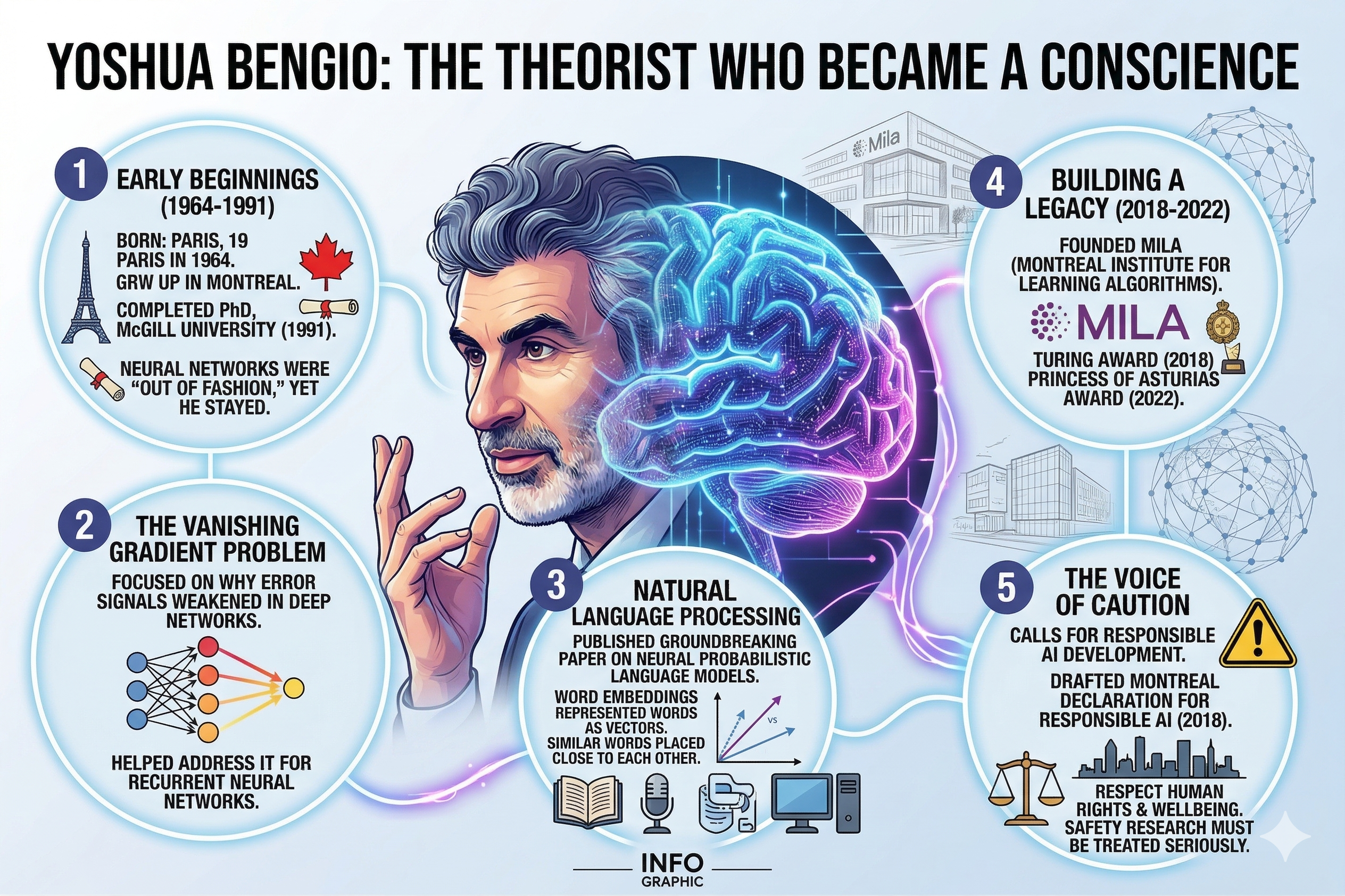

Yoshua Bengio: The theorist who became a conscience

Yoshua Bengio built his early research career at a time when neural networks were deeply out of fashion. Most of the research community had moved toward other methods. Bengio stayed with neural networks anyway.

His early work focused on a problem that made deep networks difficult to train: the vanishing gradient problem.

During backpropagation, error signals weakened as they travelled backwards through many layers, making it hard for deep networks to learn from distant patterns.
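The arithmetic behind that weakening is easy to sketch. The example below is a deliberate simplification, assuming a chain of one-unit sigmoid layers that all share a weight of 1.0. Each layer multiplies the backward signal by the sigmoid's slope, which is never larger than 0.25, so the signal shrinks geometrically with depth.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def gradient_through_layers(x, weights):
    """Return d(output)/d(input) for a chain of one-unit sigmoid layers.

    By the chain rule, each layer contributes a factor w * s * (1 - s),
    where s is that layer's sigmoid output.
    """
    activation = x
    gradient = 1.0
    for w in weights:
        activation = sigmoid(w * activation)
        gradient *= w * activation * (1.0 - activation)
    return gradient

if __name__ == "__main__":
    # Illustrative depths; the shared weight of 1.0 is an assumption.
    for depth in (2, 5, 10, 20):
        grad = gradient_through_layers(0.5, [1.0] * depth)
        print(f"{depth:2d} layers -> gradient reaching the input: {grad:.3e}")
```

With twenty such layers the signal reaching the first layer is vanishingly small, which is why early layers in deep sigmoid networks barely learned.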

Bengio worked on understanding why this happened and how to address it. His research on recurrent neural networks and sequence modelling, networks designed to handle data that unfolds over time, such as language or speech, helped lay the groundwork for natural language processing.

One of his most cited contributions came in 2003, when he and his colleagues published a paper on neural probabilistic language models. The paper showed how a neural network could learn word embeddings, a way of representing words as vectors in a mathematical space where similar words are placed close together. This idea became central to how machines process language and directly influenced the development of the large language models that power AI assistants today.

Bengio founded the Montreal Institute for Learning Algorithms (MILA), which has grown into one of the world’s leading AI research institutes. He shared the 2018 Turing Award and, in 2022, received the Princess of Asturias Award for Technical and Scientific Research.

In recent years, Bengio has become one of the most prominent voices calling for caution in AI development. He helped draft the Montreal Declaration for Responsible AI in 2018, which set out principles for developing AI in ways that respect human rights and wellbeing.

He has argued that AI developers carry responsibilities beyond technical performance, that the systems they build will affect every part of society, and that safety research must be treated as seriously as capability research.

His position has sometimes put him at odds with the pace of commercial deployment, but he has continued to speak plainly about the risks he sees.

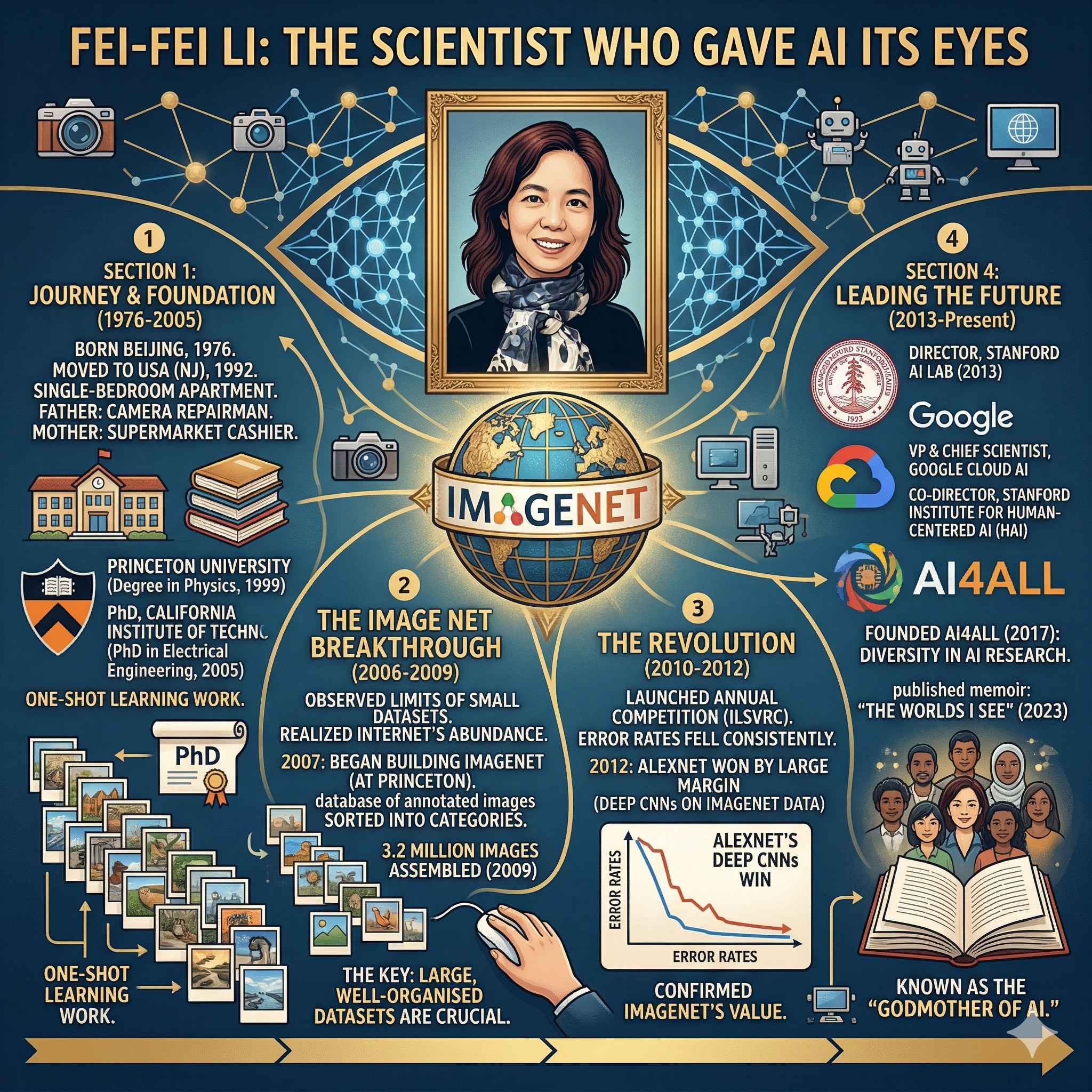

Fei-Fei Li: The scientist who gave AI its eyes

Fei-Fei Li was born in Beijing in 1976 and moved to the United States with her family when she was 16.

The family settled in New Jersey, living in a one-bedroom apartment while her parents worked to rebuild their lives, her father as a camera repairman and her mother as a supermarket cashier. Li worked part-time through school, attended Parsippany High School, and earned a scholarship to Princeton University, where she completed a degree in physics in 1999. She went on to do her doctoral work at the California Institute of Technology, graduating in 2005 with a PhD in electrical engineering.

During her doctoral studies, Li worked on a method called one-shot learning, which enables AI systems to recognise new categories from very few examples. But her contribution that changed the field most came later, through a project she began thinking about in 2006, when she was teaching at the University of Illinois at Urbana-Champaign.

Li had observed that AI systems trained on small image datasets performed poorly because they had seen too little of the world’s visual variety.

The internet, she realised, contained an abundance of images that had never been systematically organised for machine learning. In 2007, after joining Princeton’s computer science faculty, she began building ImageNet, a database of annotated images sorted into categories that reflected how humans actually describe and organise what they see.

By 2009, the team had assembled and annotated 3.2 million images.

They published their findings and, the following year, launched an annual competition inviting researchers to train algorithms on the ImageNet dataset. The competition, called the ImageNet Large Scale Visual Recognition Challenge, ran each year and tracked how accurately different systems could classify images. Error rates fell consistently. When AlexNet won the 2012 edition by a large margin, using deep convolutional neural networks trained on the ImageNet data, it confirmed what Li had suspected: large, well-organised datasets were as important as the algorithms themselves.

Li joined Stanford University in 2009 and became director of its AI laboratory in 2013. She later took a role as vice president at Google and chief scientist for Google Cloud’s AI and machine learning division before returning to Stanford in 2019 as co-director of the Stanford Institute for Human-Centered Artificial Intelligence.

Li has been vocal about the need for diversity in AI research and development. In 2017, she co-founded AI4ALL, a nonprofit that offers AI education to high school students from underrepresented backgrounds.

She has argued that who builds AI shapes what it does and who it serves, and that the field cannot afford to draw from a narrow pool of people. In 2023, she published a memoir, “The Worlds I See: Curiosity, Exploration, and Discovery at the Dawn of AI,” reflecting on her journey and her views on the field’s direction.

Her title, the Godmother of AI, came not from self-promotion but from recognition by others of what ImageNet made possible. Without a way for machines to see the world at scale, much of modern AI would have taken longer to arrive, or might have arrived differently. Li built that foundation, and in doing so, she helped shape the technology that now looks back at us from every camera and screen.

Sources: Encyclopaedia Britannica (Fei-Fei Li); Dinis Guarda, “The AI Godfather Who Stayed in the Wilderness for 20 Years,” Medium/Wisdomia.ai, January 2026; Tom Eck, “Getting to Know The Godfathers of AI,” Medium, October 2025.