Google is in talks with Marvell Technology to develop new versions of its custom artificial intelligence (AI) processors, a report by The Information has claimed. Following the news, the chipmaker’s shares jumped 5.5% in early trading on Monday (April 20).

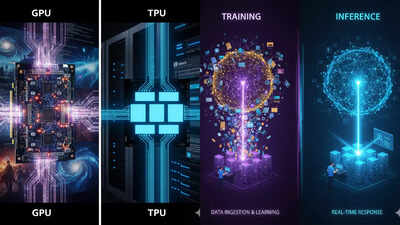

While the move signals an expansion of Google’s AI hardware efforts, it has reportedly sparked concern for its long-time partner, Broadcom.

The report said that Google is looking to Marvell to help create a specific type of chip known as an ASIC (Application-Specific Integrated Circuit), which is custom-built for one job. Industry sources told the publication that Google wants Marvell to focus on chips designed for AI inference, the process in which an AI model actually generates an answer or result for a user.

Such a chip would help Google make its AI services faster and much cheaper to run at massive scale.

What this means for Broadcom

For years, Broadcom has been the main partner for Google’s famous Tensor Processing Units (TPUs). This potential new deal with Marvell may sound like a threat, but Broadcom’s position reportedly isn’t in immediate danger. This is because Google and Broadcom recently signed an agreement solidifying their partnership through 2031.

However, with Marvell in the fold, Google can gain leverage to push back against Broadcom’s fees and ensure it isn’t reliant on a single company for its AI “brains”.

Google has also reportedly opened its doors to rival companies, allowing them to train their models on its specialised TPUs. These companies are said to include Meta and Apple, which has opted for Google for its revamped Siri. Meanwhile, Anthropic announced earlier this month that it has “signed a new agreement with Google and Broadcom for multiple gigawatts of next-generation TPU capacity that we expect to come online starting in 2027.”

A pact with Marvell for chips would also help Google build a diverse arsenal of hardware to keep its Gemini AI competitive against rivals like Microsoft and OpenAI. The search for specialised chips comes as the ‘AI arms race’ moves into a more expensive phase. As millions of users interact with AI daily, the cost of electricity and computing power is skyrocketing.